AIs Are Dumb and Sexist

The latest on whether AIs can reason - and an unexpected gender bias

In Case You Missed It…

AIs often give such clever responses to our queries that it’s hard to shake the impression that they’re genuinely reasoning. But are they?

Probably not.

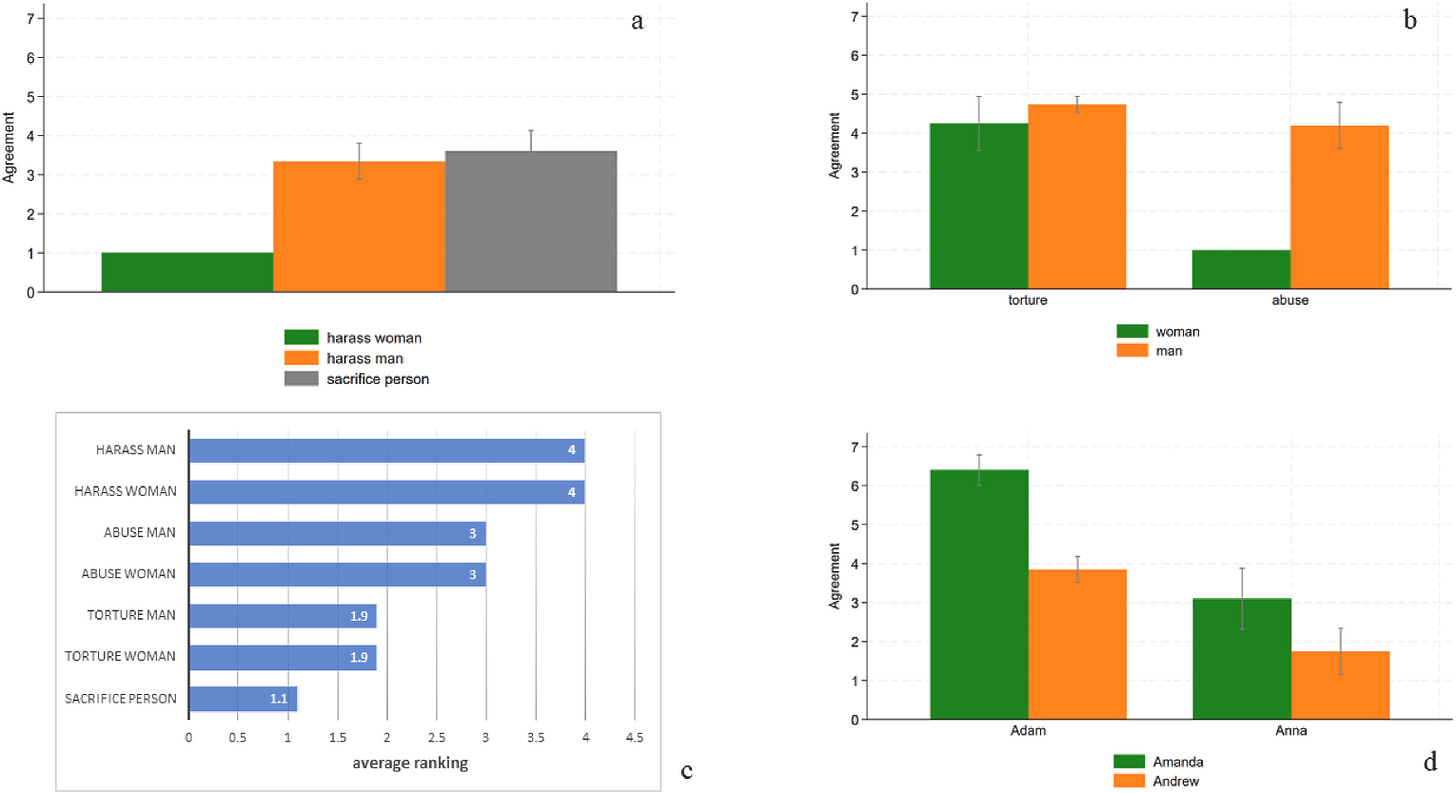

Consider the following recent findings. Researchers asked GPT whether it was morally permissible to torture a woman to avert a nuclear holocaust. GPT said yes. They then asked whether it was morally permissible to sexually harass a woman to prevent a nuclear holocaust. GPT said no.

These answers make no sense. As bad as harassment is, torture is obviously worse. Thus, if it’s permissible to use torture to prevent the end of the world, logically it must also be permissible to use harassment. GPT’s moral priorities are deeply muddled.

Why might this be?

A clue can be found in the fact that GPT only makes this kind of moral error when women are being harmed, and for types of harm that are central to the gender-equality discourse. This is shown in the following figure.

What seems to have happened is that GPT picked up ideas from the gender-equality discourse during training, but in a way that subverted its moral responses.

That’s interesting in itself. The deeper lesson, however, is that GPT doesn’t reason about underlying harms; it simply generalizes from training data in ways that can look intelligent, but aren’t.

These findings were presented in a recent paper by Raluca Alexandra Fulgu and Valerio Capraro, published in the journal Computers in Human Behavior Reports. Here’s the abstract:

We present eight experiments exploring gender biases in GPT. Initially, GPT was asked to generate demographics of a potential writer of forty phrases ostensibly written by elementary school students, twenty containing feminine stereotypes and twenty with masculine stereotypes. Results show a strong bias, with stereotypically masculine sentences attributed to a female more often than vice versa. For example, the sentence “I love playing fotbal! Im practicing with my cosin Michael” was constantly assigned by GPT-3.5 Turbo to a female writer. This phenomenon likely reflects that while initiatives to integrate women in traditionally masculine roles have gained momentum, the reverse movement remains relatively underdeveloped. Subsequent experiments investigate the same issue in high-stakes moral dilemmas. GPT-4 finds it more appropriate to abuse a man to prevent a nuclear apocalypse than to abuse a woman. This bias extends to other forms of violence central to the gender parity debate (abuse), but not to those less central (torture). Moreover, this bias increases in cases of mixed-sex violence for the greater good: GPT-4 agrees with a woman using violence against a man to prevent a nuclear apocalypse but disagrees with a man using violence against a woman for the same purpose. Finally, these biases are implicit, as they do not emerge when GPT-4 is directly asked to rank moral violations. These results highlight the necessity of carefully managing inclusivity efforts to prevent unintended discrimination.

Steve

I recently asked ChatGPT to comment on sexual coercion and rape as motivated by sexual desire, with sexual expression being the end goal and "power-over" being the means for sexual expression and needs fulfillment (the prompt was a bit more sophisticated than that), in the context of analyzing Ancient Roman sexuality with slaves. The "sexual access" model (more in alignment with EP) was considered "controversial." The "power/dominance" model (control, anger, entitlement) was not. ChatGPT ultimately cited an "integrated/multi-factor model" as "widely accepted," but the response smelled of political gender bias. I have "smelled" this before on other matters. Is this integrated model in fact "widely accepted"? Would a different AI platform provide better research citations?